Phase 1: Project Goals

Progressive Meshing offers good potential as an automatic LOD generation algorithm. It may be a good terrain

continuous LOD algorithm, but this is disputed by some. Though the concentration in this project was on PLY models

(Stanford Bunny), I also ran some terrains through it for basic interest, but View Dependent Progressive Meshing is

probably a better choice for terrains.

The goal of this project was to develop a program that would perform the pre-computation of the progressive

mesh for a given object (PLY file or DEM file) then would write it out to a file which could be read by a

viewer. As part of this project, a viewer was also developed.

Progressive Meshing

View Independent Progressive Meshing (VIPM), by Hugues Hoppe, was chosen for this project. Due to the fact that

the solution is view independent, the processes chosen was to produce the progressive mesh up front and save it to

a file.

To simplify and increase the performance of final display, the decision was made to not permit collapsing to a

new vertex. Instead an edge must collapse to one of its existing vertices. This limits the vertex list required

for display to the original set of vertices and nothing more.

High Polygon Data

To obtain the high polygon

data which would help illustrate the use of Progressive Meshing, I used the PLY file reader written for the

quad/triangle stripping project. I decided to concentrate on the Stanford bunny. It provided

a small enough file that the code could be debugged while still having enough polygons to show the effects.

Quadric Error Metric

Garland and Heckbert's Quadric Error Metric for Surface Simplification was used to prioritize the edges for

collapsing. It is a relatively simple metric and fairly fast to compute. It must be penalized for a couple factors.

These are when the edge collapse would cause a "fold-over" or when it is a edge which would destory the boundary of

the object (think terrain.)

This implementation does not take into account color, texture, etc and therefore does not try to

minimize the effects in these domains. Papers have been published extending this technique to address these,

and I may add support for these extensions at a later date.

Mesh Generation Implementation

The Progressive Meshing engine was written using quite a number of STL collection classes. Vector templates were

used for maintaining lists of edges, vertices, faces, and information pertaining to all of the collapses (so the final file can be written out later.) Initially the edge priority queue was written with a priority queue, later to be replaced with a multiset. (See Performance Improvements for a discussion on this.)

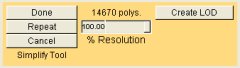

Viewer Implementation

The viewer reads in the progressive mesh file and can display it at any resolution between the lowest coded into the

file and the original resolution of the file. The file and the implementation were designed for speed with regards

to performing splits and collapses. As a result, the time it takes to switch from the lowest resolution bunny (676

triangles) to the highest (65k triangles) is less than .015 secs, on a Pentium III 800! All the work is simply

moving a couple indexes and changing a couple vertex indices.

Though the speed of collapses and splits is extremely fast, the viewer could be optimized to improve the rendering

speed. All of the triangles are rendered via looking up their vertices in the vertex list. The vertex list is not

optimized for best cache utilization, which could easily be done in either the file writer or in the file loader.

No work has been done yet to optimize this final rendering (because it isn't that slow.)

Illustrating The Usage of Levels of Detail on Polygon Models

For those who may think that this is quite a bit of work for what is seemingly a small gain, the image below

illustrates how we can reduce our polygon load for a frame greatly based upon the distance to the bunny without

losing any detectable image quality reduction.

|